I'm Karen and I am a Usability Analyst in HMRC. With something like 600 different systems on our IT estate, testing how applications work for our people who use assistive technology, such as screen readers and screen magnifiers, is an important part of what we do.

Recently, our Usability Team in Telford tried a different approach and got some great results. This is my blog and I'd like to tell you more about what we did.

Limitations of remote testing

Volunteers from across HMRC who use assistive technology have always been willing to help with testing. Until recently, this involved loading the application being tested onto the volunteers' individual workstations. While this lessens disruption to the tester’s daily work routine, it has always had a big disadvantage.

It means Usability Analysts like me can only provide limited support to testers, by phone or email. Also, even with an average of 10 to 12 testers for each test exercise, the observations and results we obtain can sometimes be limited. Typically, any testing will cover a range of assistive technology software, including Dragon, JAWS, Read and Write Gold, Supernova and Zoomtext.

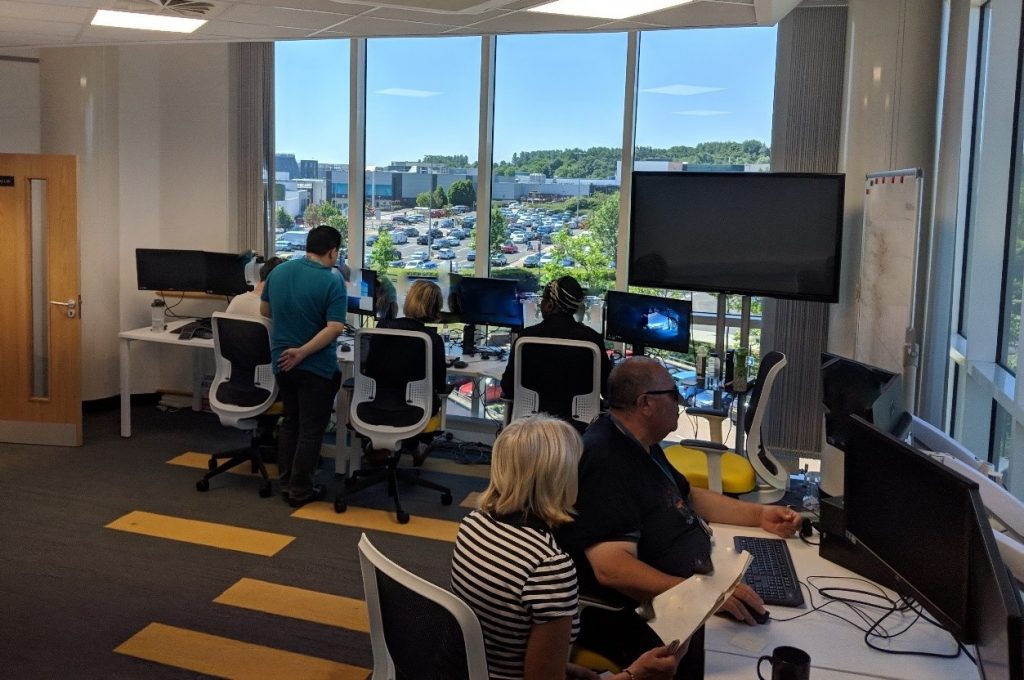

Test lab setup

This month, due to the requirements of a particular project, we decided that our test lab facilities in Telford would be a more appropriate environment to test end user accessibility. I set about inviting staff from across HMRC to attend and made the necessary arrangements in liaison with Sau Chung, a Test Manager with our managed test service.

On the day, the sun was beating down as our testers travelled from London and Liverpool to take part. Sau also attended to provide support from a project point of view. Testers were not familiar with the application being tested and had varying levels of reliance on assistive technology.

Together with my colleague Ash, I facilitated the session and ensured the atmosphere was informal and the testers felt comfortable and supported. The test lab is well-equipped and we'd pre-loaded the workstations with all the assistive tools. Dual monitors allowed the users to have the test scenario script open on one screen and the browser interface open on the other.

Interactive and supportive

Having all the testers in a single location with the appropriate facilities allowed them to interact with each other and share ideas on how to complete each of the test scenarios. It also helped create a far more supportive environment. I think it was also more effective to provide guidance and support to the group in person, which would have been far more challenging if they were based in remote locations.

Feedback from Test Manager Sau was very positive: “I’d like to say that it was highly conducive to what we aim to achieve. Facilities were great and both Karen and Ash were great hosts for the session. This helped to settle the testers into the environment quickly and allowed them to feel at ease with their surroundings.”

I can also see that this arrangement helped reduce frustration among the testers with areas that did not operate as expected. As a result of issues that were picked up in the session, the project has some further work to do in order to fully meet the needs of HMRC accessibility users.

I feel that, collectively, we have set a very high standard for our in-house accessibility testing in HMRC, and that's something I feel very proud of. The new approach we've tried is another step forward and we are hoping to expand this to more projects in the coming weeks and months.

1 comment

Comment by Aleem Islan posted on

Interesting article. It is good to hear that HMRC is undertaking this type of activity.